Just want to clarify, this is not my Substack, I’m just sharing this because I found it insightful.

The author describes himself as a “fractional CTO” (no clue what that means, don’t ask me) and advisor. His clients asked him how they could leverage AI. He decided to experience it for himself. From the author (emphasis mine):

I forced myself to use Claude Code exclusively to build a product. Three months. Not a single line of code written by me. I wanted to experience what my clients were considering—100% AI adoption. I needed to know firsthand why that 95% failure rate exists.

I got the product launched. It worked. I was proud of what I’d created. Then came the moment that validated every concern in that MIT study: I needed to make a small change and realized I wasn’t confident I could do it. My own product, built under my direction, and I’d lost confidence in my ability to modify it.

Now when clients ask me about AI adoption, I can tell them exactly what 100% looks like: it looks like failure. Not immediate failure—that’s the trap. Initial metrics look great. You ship faster. You feel productive. Then three months later, you realize nobody actually understands what you’ve built.

Not immediate failure—that’s the trap. Initial metrics look great. You ship faster. You feel productive.

And all they’ll hear is “not failure, metrics great, ship faster, productive” and go against your advice because who cares about three months later, that’s next quarter, line must go up now. I also found this bit funny:

I forced myself to use Claude Code exclusively to build a product. Three months. Not a single line of code written by me… I was proud of what I’d created.

Well you didn’t create it, you said so yourself, not sure why you’d be proud, it’s almost like the conclusion should’ve been blindingly obvious right there.

The top comment on the article points that out.

It’s an example of a far older phenomenon: Once you automate something, the corresponding skill set and experience atrophy. It’s a problem that predates LLMs by quite a bit. If the only experience gained is with the automated system, the skills are never acquired. I’ll have to find it but there’s a story about a modern fighter jet pilot not being able to handle a WWII era Lancaster bomber. They don’t know how to do the stuff that modern warplanes do automatically.

It’s more like the ancient phenomenon of spaghetti code. You can throw enough code at something until it works, but the moment you need to make a non-trivial change, you’re doomed. You might as well throw away the entire code base and start over.

And if you want an exact parallel, I’ve said this from the beginning, but LLM coding at this point is the same as offshore coding was 20 years ago. You make a request, get a product that seems to work, but maintaining it, even by the same people who created it in the first place, is almost impossible.

Indeed… Throw-away code is currently where AI coding excels. And that is cool and useful - creating one off scripts, self-contained modules automating boilerplate, etc.

You can’t quite use it the same way for complex existing code bases though… Not yet, at least…

Yes, that’s exactly how I use Cursor and local LLMs. There are a ton of cases where you need a one-time script to prepare data, sort through data, fetch data via an API, etc. Even something simple like adding a role on a Discord channel (God save you if your company uses that piece of crap for communication) can be done with a script too, especially if you need to add the role to thousands of users, for example. Of course, it can be done properly through a normal development cycle, but that’s expensive, while shitcoding through Cursor can be done by anyone.

I agree with you, though proponents will tell you that’s by design. Supposedly, it’s like with high-level languages. You don’t need to know the actual instructions in assembly anymore to write a program with them. I think the difference is that high-level language instructions are still (mostly) deterministic, while an LLM prompt certainly isn’t.

Yep, that’s the key issue that so many people fail to understand. They want AI to be deterministic but it simply isn’t. It’s like expecting a human to get the right answer to any possible question; it’s just not going to happen. The only thing we can do is bring error rates with AI lower than those of a human doing the same task, and it will be at that point that the AI becomes useful. But even at that point there will always be the alignment issue and nondeterminism, meaning AI will never behave exactly the way we want or expect it to.
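To make the determinism point concrete, here is a minimal Python sketch. It assumes a toy next-token distribution (the tokens and probabilities in `NEXT_TOKEN_PROBS` are invented, and nothing here calls a real model). Always picking the most likely token gives the same answer every time, while sampling from the distribution can give different answers for the same prompt.

```python
import random

# Hypothetical next-token probabilities for one fixed prompt (invented values).
NEXT_TOKEN_PROBS = {"return": 0.6, "raise": 0.3, "pass": 0.1}


def greedy_pick(probs):
    """Always choose the most likely token: same input, same output."""
    return max(probs, key=probs.get)


def sampled_pick(probs, rng):
    """Draw one token at random, weighted by its probability."""
    tokens = list(probs)
    weights = [probs[token] for token in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0]


rng = random.Random()  # unseeded, so the sampled list varies between runs
print("greedy: ", [greedy_pick(NEXT_TOKEN_PROBS) for _ in range(5)])
print("sampled:", [sampled_pick(NEXT_TOKEN_PROBS, rng) for _ in range(5)])
```

In this toy, seeding the generator (for example `random.Random(7)`) makes the sampled run repeatable, which may be part of why people disagree about whether to call these tools deterministic.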

The thing about this perspective is that I think it’s actually overly positive about LLMs, as it frames them as just the latest in a long line of automations.

Not all automations are created equal. For example, compare using a typewriter to using a text editor. Besides a few details about the ink ribbon and movement mechanisms you really haven’t lost much in the transition. This is despite the fact that the text editor can be highly automated with scripts and hot keys, allowing you to manipulate even thousands of pages of text at once in certain ways. Using a text editor certainly won’t make you forget how to write like using ChatGPT will.

I think the difference lies in the relationship between the person and the machine. To paraphrase Cathode Ray Dude, people who are good at using computers deduce the internal state of the machine, mirror (a subset of) that state as a mental model, and use that to plan out their actions to get the desired result. People that aren’t good at using computers generally don’t do this, and might not even know how you would start trying to.

For years ‘user friendly’ software design has catered to that second group, as they are both the largest contingent of users and the ones that needed the most help. To do this software vendors have generally done two things: try to move the necessary mental processes from the user’s brain into the computer and hide the computer’s internal state (so that it’s not implied that the user has to understand it, so that a user that doesn’t know what they’re doing won’t do something they’ll regret, etc). Unfortunately this drives that first group of people up the wall. Not only does hiding the internal state of the computer make it harder to deduce, every “smart” feature they add to try to move this mental process into the computer itself only makes the internal state more complex and harder to model.

Many people assume that if this is the way you think about software you are just an elitist gatekeeper, and you only want your group to be able to use computers. Or you might even be accused of ableism. But the real reason is what I described above, even if it’s not usually articulated in that way.

Now, I am of the opinion that the ‘mirroring the internal state’ method of thinking is the superior way to interact with machines, and the approach to user friendliness I described has actually done a lot of harm to our relationship with computers at a societal level. (This is an opinion I suspect many people here would agree with.) And yet that does not mean that I think computers should be difficult to use. Quite the opposite, I think that modern computers are too complicated, and that in an ideal world their internal states and abstractions would be much simpler and more elegant, but no less powerful. (Elaborating on that would make this comment even longer though.) Nor do I think that computers shouldn’t be accessible to people with different levels of ability. But just as a random person in a store shouldn’t grab a wheelchair user’s chair handles and start pushing them around, neither should Windows (for example) start changing your settings on updates without asking.

Anyway, all of this is to say that I think LLMs are basically the ultimate in that approach to ‘user friendliness’. They try to move more of your thought process into the machine than ever before, their internal state is more complex than ever before, and it is also more opaque than ever before. They also reflect certain values endemic to the corporate system that produced them: that the appearance of activity is more important than the correctness or efficacy of that activity. (That is, again, a whole other comment though.) The result is that they are extremely mind numbing, in the literal sense of the phrase.

Once you automate something, the corresponding skill set and experience atrophy. It’s a problem that predates LLMs by quite a bit. If the only experience gained is with the automated system, the skills are never acquired.

Well, to be fair, different skills are acquired. You’ve learned how to create automated systems, that’s definitely a skill. In one of my IT jobs there were a lot of people who did things manually, updated computers, installed software one machine at a time. But when someone figures out how to automate that, push the update to all machines in the room simultaneously, that’s valuable and not everyone in that department knew how to do it.

So yeah, I guess my point is, you can forget how to do things the old way, but that’s not always bad. Like, so you don’t really know how to use a scythe, that’s fine if you have a tractor, and trust me, you aren’t missing much.

I forced myself to use Claude Code exclusively to build a product. Three months. Not a single line of code written by me… I was proud of what I’d created.

Well you didn’t create it, you said so yourself, not sure why you’d be proud, it’s almost like the conclusion should’ve been blindingly obvious right there.

Does a director create the movie? They don’t usually edit it, they don’t have to act in it, nor do all directors write movies. Yet the person giving directions is seen as the author.

The idea is that vibe coding is like being a director or architect. I mean that’s the idea. In reality it seems it doesn’t really pan out.

You can vibe write and vibe edit a movie now too. They also turn out shit.

The issue is that an LLM isn’t a person with skills and knowledge. It’s a complex guessing box that gets things kinda right, but not actually right, and it absolutely can’t tell what’s right or not. It has no actual skills or experience or humanity that a director can expect a writer or editor to have.

What’s impressive about LLM is how good it is at sounding right.

Just makes me think of this character from Adventure Time

What season they from? I thought I’d seen most of it but don’t recall them

This is from season 1 episode 18, titled “Dungeon”

Wrong, it’s just outsourcing.

You’re making a false-equivalence. A director is actively doing their job; they’re a puppeteer and the rest is their puppet. The puppeteer is not outsourcing his job to a puppet.

And I’m pretty sure you don’t know what architects do.

If I hire a coder to write an app for me, whether it’s a clanker or a living being, I’m outsourcing the work; I’m a manager.

It’s like tasking an artist to write a poem for you about love and flowers, and being proud about that poem.

yeah i don’t get why the ai can’t do the changes

don’t you just feed it all the code and tell it? i thought that was the point of 100% AI

We’re about to face a crisis nobody’s talking about. In 10 years, who’s going to mentor the next generation? The developers who’ve been using AI since day one won’t have the architectural understanding to teach. The product managers who’ve always relied on AI for decisions won’t have the judgment to pass on. The leaders who’ve abdicated to algorithms won’t have the wisdom to share.

Except we are talking about that, and the tech bro response is “in 10 years we’ll have AGI and it will do all these things all the time permanently.” In their roadmap, there won’t be a next generation of software developers, product managers, or mid-level leaders, because AGI will do all those things faster and better than humans. There will just be CEOs, the capital they control, and AI.

What’s most absurd is that, if that were all true, that would lead to a crisis much larger than just a generational knowledge problem in a specific industry. It would cut regular workers entirely out of the economy, and regular workers form the foundation of the economy, so the entire economy would collapse.

“Yes, the planet got destroyed. But for a beautiful moment in time we created a lot of value for shareholders.”

That’s why they’re all-in on authoritarianism.

Yep, and now you know why all the tech companies suddenly became VERY politically active. This future isn’t compatible with democracy. Once these companies no longer provide employment their benefit to society becomes a big fat question mark.

According to a study, the lower top 10% accounts for something like 68% of cash flow in the economy. Us plebs are being cut out altogether.

That being said, I think if people can’t afford to eat, things might get bad. We will probably end up a kept population in these ghouls’ fever dreams.

Edit: I’m an idiot.

Once Boston Dynamics-style dogs and androids can operate over a number of days independently, I’d say all bets are off that we would be kept around as pets.

I’m fairly certain your Musks and Altmans would be content with a much smaller human population existing to only maintain their little bubble and damn everything else.

Edit: I’m an idiot.

Same here. Nobody knows what the eff they are doing. Especially the people in charge. Much of life is us believing confident people who talk a good game but don’t know wtf they are doing and really shouldn’t be allowed to make even basic decisions outside a very narrow range of competence.

We have an illusion of broad meritocracy and accountability in life but it’s mostly just not there.

What does lower top 10% mean?

Also, even if we make it through a wave of bullshit and all these companies fail in 10 years, the next wave will be ready and waiting, spouting the same crap - until it’s actually true (or close enough to be bearable financially). We can’t wait any longer to get this shit under control.

So there’s actual developers who could tell you from the start that LLMs are useless for coding, and then there’s this moron & similar people who first have to fuck up an ecosystem before believing the obvious. Thanks fuckhead for driving RAM prices through the ceiling… And for wasting energy and water.

I can at least kinda appreciate this guy’s approach. If we assume that AI is a magic bullet, then it’s not crazy to assume we, the existing programmers, would resist it just to save our own jobs. Or we’d complain because it doesn’t do things our way, but we’re the old way and this is the new way. So maybe we’re just being whiny and can be ignored.

So he tested it to see for himself, and what he found was that he agreed with us, that it’s not worth it.

Ignoring experts is annoying, but doing some of your own science and getting first-hand experience isn’t always a bad idea.

And not only did he see for himself, he wrote up and published his results.

Yup. This was almost science. It’s just lacking measurements and repeatability.

100% this. The guy was literally a consultant and a developer. It’d just be bad business for him to outright dismiss AI without having actual hands on experience with said product. Clients want that type of experience and knowledge when paying a business to give them advice and develop a product for them.

Except that outright dismissing snake oil would not at all be bad business. Calling a turd a diamond neither makes it sparkle, nor does it get rid of the stink.

I can’t just call everything snake oil without some actual measurements and tests.

Naive cynicism is just as naive as blind optimism

I can’t just call everything snake oil without some actual measurements and tests.

With all due respect, you have not understood the basic mechanic of machine learning and the consequences thereof.

With due respect, you have not understood how snake oil is detected.

Problem is that statistical word prediction has fuck-all to do with AI. It’s not and will never be. By “giving it a try” you contribute to the spread of this snake oil. And even if someone came up with actual AI, if it used enough resources to impact our ecosystem, instead of being a net positive, and if it was in the greedy hands of billionaires, then using it is equivalent to selling your executioner an axe.

Terrible take. Thanks for playing.

It’s actually impressive the level of downvotes you’ve gathered in what is generally a pretty anti-ai crowd.

They are useful for doing the kind of boilerplate boring stuff that any good dev should have largely optimized and automated already. If it’s 1) dead simple and 2) extremely common, then yeah an LLM can code for you, but ask yourself why you don’t have a time-saving solution for those common tasks already in place? As with anything LLM, it’s decent at replicating how humans in general have responded to a given problem, if the problem is not too complex and not too rare, and not much else.

That’s exactly what I so often find myself saying when people show off some neat thing that a code bot “wrote” for them in x minutes after only y minutes of “prompt engineering”. I’ll say, yeah I could also do that in y minutes of (bash scripting/vim macroing/system architecting/whatever), but the difference is that afterwards I have a reusable solution that: I understand, is automated, is robust, and didn’t consume a ton of resources. And as a bonus I got marginally better as a developer.

It’s funny that if you stick them in an RPG and give them an ability to “kill any level 1-x enemy instantly, but don’t gain any xp for it” they’d all see it as the trap it is, but can’t see how that’s what AI so often is.

As you said, “boilerplate” code can be script generated - and there are IDEs that already do this, but in a deterministic way, so that you don’t have to proof-read every single line to avoid catastrophic security or crash flaws.
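As a rough illustration of deterministic boilerplate generation, here is a minimal Python sketch. The function name `generate_class` and the shape of the generated code are assumptions made up for this example, not how any particular IDE does it. The point is only that the same inputs always produce exactly the same source text, so it can be reviewed once as a template rather than line by line on every run.

```python
def generate_class(class_name, fields):
    """Return Python source for a class with read-only properties.

    Purely template-driven: the same arguments always yield the same text.
    """
    if not fields:
        raise ValueError("at least one field is required")

    lines = [f"class {class_name}:"]
    lines.append(f"    def __init__(self, {', '.join(fields)}):")
    for field in fields:
        lines.append(f"        self._{field} = {field}")
    for field in fields:
        lines.append("")
        lines.append("    @property")
        lines.append(f"    def {field}(self):")
        lines.append(f"        return self._{field}")
    return "\n".join(lines) + "\n"


# Hypothetical usage: generate an accessor class for two fields.
print(generate_class("User", ["name", "email"]))
```

Running it twice with the same arguments gives byte-for-byte identical output, which is the property the comment above is pointing at.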

And then there are actual good developers who could or would tell you that LLMs can be useful for coding, in the right context and if used intelligently. No harm, for example, in having LLMs build out some of your more mundane code like unit/integration tests, have it help you update your deployment pipeline, generate boilerplate code that’s not already covered by your framework, etc. That it’s not able to completely write 100% of your codebase perfectly from the get-go does not mean it’s entirely useless.

Other than that it’s work that junior coders could be doing, to develop the next generation of actual good developers.

Yes, and that’s exactly what everyone forgets about automating cognitive work. Knowledge or skill needs to be intergenerational or we lose it.

If you have no junior developers, who will turn into senior developers later on?

If you have no junior developers, who will turn into senior developers later on?

At least it isn’t my problem. As long as I have CrowdStrike, Cloudflare, Windows11, AWS us-east-1 and log4j… I can just keep enjoying today’s version of the Internet, unchanged.

AI, duh.

Al is a pretty good guy but he can’t be everywhere. Maybe he can use some A.I. to help!

If it’s boilerplate, copy/paste; find/replace works just as well without needing data centers in the desert to develop.

And then there are actual good developers who could or would tell you that LLMs can be useful for coding

The only people who believe that are managers and bad developers.

You’re wrong, whether you figure that out now or later. Using an LLM where you gatekeep every write is something that good developers have started doing. The most senior engineers I work with are the ones who have adopted the most AI into their workflow, and with the most care. There’s a difference between vibe coding and responsible use.

There’s a difference between vibe coding and responsible use.

There’s also a difference between the occasional evening getting drunk and alcoholism. That doesn’t make an occasional event healthy, nor does it mean you are qualified to drive a car in that state.

People who use LLMs in production code are - by definition - not “good developers”. Because:

- a good developer has a clear grasp on every single instruction in the code - and critically reviewing code generated by someone else is more effort than writing it yourself

- pushing code to production without critical review is grossly negligent and compromises data & security

This already means the net gain with use of LLMs is negative. Can you use it to quickly push out some production code & impress your manager? Possibly. Will it be efficient? It might be. Will it be bug-free and secure? You’ll never know until shit hits the fan.

Also: using LLMs to generate code, a dev will likely be violating copyrights of open source left and right, effectively copy-pasting licensed code from other people without attributing authorship, i.e. they exhibit parasitic behavior & outright violate laws. Furthermore the stuff that applies to all users of LLMs applies:

- they contribute to the hype, fucking up our planet, causing brain rot and skill loss on average, and pumping hardware prices to insane heights.

We have substantially similar opinions, actually. I agree on your points of good developers having a clear grasp over all of their code, ethical issues around AI (not least of which are licensing issues), skill loss, hardware prices, etc.

However, what I have observed in practice is different from the way you describe LLM use. I have seen irresponsible use, and I have seen what I personally consider to be responsible use. Responsible use involves taking a measured and intentional approach to incorporating LLMs into your workflow. It’s a complex topic with a lot of nuance, like all engineering, but I would be happy to share some details.

Critical review is the key sticking point. Junior developers also write crappy code that requires intense scrutiny. It’s not impossible (or irresponsible) to use code written by a junior in production, for the same reason. For a “good developer,” many of the quality problems are mitigated by putting roadblocks in place to…

- force close attention to edits as they are being written,

- facilitate handholding and constant instruction while the model is making decisions, and

- ensure thorough review at the time of design/writing/conclusion of the change.

When it comes to making safe and correct changes via LLM, specifically, I have seen plenty of “good developers” in real life, now, who have engineered their workflows to use AI cautiously like this.

Again, though, I share many of your concerns. I just think there’s nuance here and it’s not black and white/all or nothing.

While I appreciate your differentiated opinion, I strongly disagree. As long as there is no actual AI involved (and considering that humanity is dumb enough to throw hundreds of billions at a gigantic parrot, I doubt we would stand a chance to develop true AI, even if it was possible to create), the output has no reasoning behind it.

- it violates licenses and denies authorship and - if everyone was indeed equal before the law, this alone would disqualify the code output from such a model because it’s simply illegal to use code in violation of license restrictions & stripped of licensing / authorship information

- there is no point. Developing code is 95-99% solving the problem in your mind, and 1-5% actual code writing. You can’t have an algorithm do the writing for you and then skip on the thinking part. And if you do the thinking part anyways, you have gained nothing.

A good developer has zero need for non-deterministic tools.

As for potential use in brainstorming ideas / looking at potential solutions: that’s what the usenet was good for, before it was fucked up for everyone by those very corporations, who are now force-feeding everyone the snake oil that they pretend has any semblance of intelligence.

violates licenses

Not a problem if you believe all code should be free. Being cheeky but this has nothing to do with code quality, despite being true

do the thinking

This argument can be used equally well in favor of AI assistance, and it’s already covered by my previous reply

non-deterministic

It’s deterministic

brainstorming

This is not what a “good developer” uses it for

You’re pushing code to prod without pr’s and code reviews? What kind of jank-ass cowboy shop are you running?

It doesn’t matter if an LLM or a human wrote it, it needs peer review and unit tests, and it needs to go through QA before it gets anywhere near production.

Maybe they’ll listen to one of their own?

The kind of useful article I would expect then is one explaining why word prediction != AI

Don’t worry. The people on LinkedIn and tech executives tell us it will transform everything soon!

I really have not found AI to be useless for coding. I have found it extremely useful and it has saved me hundreds of hours. It is not without its faults or frustrations, but it really is a tool I would not want to be without.

That’s because you are not a proper developer, as proven by your comment. And you create tech legacy that will have a net cost in terms of maintenance or downtime.

I am for sure not a coder, as it has never been my strong suit, but I am without a doubt an awesome developer or I would not have a top rated multiplayer VR app that is pushing the boundaries of what mobile VR can do.

The only person who will have to look at my code is me so any and all issues be it my code or AI code will be my burden and AI has really made that burden much less. In fact, I recently installed Coplay in my Unity Engine Editor and OMG it is amazing at assisting not just with code, but even finding little issues with scene setup, shaders, animations and more. I am really blown away with it. It has allowed me to spend even less time on the code and more time imagineering amazing experiences which is what fans of the app care about the most. They couldn’t care less if I wrote the code or AI did as long as it works and does not break immersion. Is that not what it is all about at the end of the day?

As long as AI helps you achieve your goals and your goals are grounded, including maintainability, I see no issues. Yeah, misdirected use of AI can lead to hard to maintain code down the line, but that is why you need a human developer in the loop to ensure the overall architecture and design make sense. Any code base can become hard to maintain if not thought through, be it human- or AI-written.

Look, bless your heart if you have a successful app, but success / sales is not exclusive to products of quality. Just look around at all the slop that people buy nowadays.

As long as AI helps you achieve your goals and your goals are grounded, including maintainability, I see no issues.

Two issues with that

- what you are using has nothing whatsoever to do with AI, it’s a glorified pattern repeater - an actual parrot has more intelligence

- if the destruction of entire ecosystems for slop is not an issue that you see, you should not be allowed anywhere near technology (as by now probably billions of people)

I do not understand the point you are making about my particular situation, as I am not making slop. Plus, one person’s slop is another’s treasure. What exactly are you suggesting, as the 2 issues you outlined seem like they are being directed at someone else, perhaps?

- I am calling it AI as that is what it is called, but you are correct, it is a pattern predictor

- I am not creating slop but something deeply immersive and enjoyed by people. In terms of the energy used, I am on solar and run local LLMs.

I didn’t say your particular application that I know nothing about is slop, I said success does not mean quality. And if you use statistical pattern generation to save time, chances are high that your software is not of good quality.

Even solar energy is not harvested waste-free (chemical energy and production of cells). Nevertheless, even if it were, you are still contributing to the spread of slop and harming other people. Both through spreading acceptance of a technology used to harm billions of people for the benefit of a few, and through energy and resource waste.

I am sure my code could be better. I am also sure the SDKs I use could be better and the game engine could be better. For what I need, they all work well enough to get the job done. I am sure issues will come up as a result, as they have many times in the past already, even before LLMs helped, but that is par for the course for a developer to tackle.

The developers can’t debug code they didn’t write.

This is a bit of a stretch.

agreed. 50% of my job is debugging code I didn’t write.

I mean I was trying to solve a problem t’other day (hobbyist) - it told me to create a

function foo(bar): await object.foo(bar)

then in object

function foo(bar): _foo(bar)

function _foo(bar): original_object.foo(bar)

like literally passing a variable between three wrapper functions in two objects that did nothing except pass the variable back to the original function in an infinite loop

add some layers and complexity and it’d be very easy to get lost
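A minimal Python sketch of the wrapper chain described above. The class names `Original` and `Helper` are made up for illustration, and the `await` is dropped to keep it short. Each method only forwards the argument, and the last hop calls back into the first, so the call never reaches any real work. In Python the chain ends with a `RecursionError` once the interpreter's recursion limit is hit, rather than spinning forever.

```python
class Helper:
    """Second object: two wrappers that only forward the argument."""

    def __init__(self, original):
        self.original = original

    def foo(self, bar):
        return self._foo(bar)

    def _foo(self, bar):
        # Hands the value straight back to where it came from.
        return self.original.foo(bar)


class Original:
    """First object: its foo() delegates to the helper."""

    def __init__(self):
        self.helper = Helper(self)

    def foo(self, bar):
        return self.helper.foo(bar)


def call_ends_in_recursion_error(value):
    """Return True if the wrapper chain recurses until Python gives up."""
    try:
        Original().foo(value)
    except RecursionError:
        return True
    return False


print(call_ends_in_recursion_error("bar"))  # prints True
```

With only three hops the cycle is easy to spot; spread across more files and layers, the same shape is much harder to see.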

The few times I’ve used LLMs for coding help, usually because I’m curious if they’ve gotten better, they let me down. Last time it was insistent that its solution would work as expected. When I gave it an example that wouldn’t work, it even broke down each step of the function giving me the value of its variables at each step to demonstrate that it worked… but at the step where it had fucked up, it swapped the value in the variable to one that would make the final answer correct. It made me wonder how much water and energy it cost me to be gaslit into a bad solution.

How do people vibe code with this shit?

As a learning process it’s absolutely fine.

You make a mess, you suffer, you debug, you learn.

But you don’t call yourself a developer (at least I hope) on your CV.

Vibe coders can’t debug code because they didn’t write

Vibe coders can’t debug code because they can’t write code

Yes, this is what I intended to write but I submitted it hastily.

It’s like a catch-22, they can’t write code so they vibecode, but to maintain vibed code you would necessarily need to write code to understand what’s actually happening

I don’t get this argument. Isn’t the whole point that the ai will debug and implement small changes too?

Think an interior designer having to reengineer the columns and load bearing walls of a masonry construction.

What are the proportions of cement and gravel for the mortar? What type of bricks to use? Do they comply with the PSI requirements? What caliber should the rebars be? What considerations for the pouring of concrete? Where to put the columns? What thickness? Will the building fall?

“I don’t know that shit, I only design the color and texture of the walls!”

And that, my friends, is why vibe coding fails.

And it’s even worse: Because there are things you can more or less guess and research. The really bad part is the things you should know about but don’t even know they are a thing!

Unknown unknowns: Thread synchronization, ACID transactions, resiliency patterns. That’s the REALLY SCARY part. Write code? Okay, sure, let’s give the AI a chance. Write stable, resilient code with fault tolerance, and EASY TO MAINTAIN? Nope. You’re fucked. Now the engineers are gone and the newbies are in charge of fixing bad code built by an alien intelligence that didn’t do its own homework and it’s easier to rewrite everything from scratch.

If you need to refactor your program you might as well start from the beginning

Some can’t because they never acquired the skill to read code. But most did and can.

If you’ve never had to debug code. Are you really a developer?

There is zero chance you have never written a bug, so… Who is fixing them?

Unless you just leave them because you work for Infosys or worse but then I ask again - are you really a developer?

I think it highly depends on the skill and experience of the dev. A lot of the people flocking into the vibe coding hype are not necessarily people who know about coding practices (including code review etc …) or who are experienced in directing AI agents to achieve such goals. The result is the MIT prediction. Although, this will start to change soon.

Great article, brave and correct. Good luck getting the same leaders who blindly believe in a magical trend for this or next quarters numbers; they don’t care about things a year away let alone 10.

I work in HR and was struck by the parallel between management jobs being gutted by major corps starting in the 80s and 90s during “downsizing” who either never replaced them or offshored them. They had the Big 4 telling them it was the future of business. Know who is now providing consultation to them on why they have poor ops, processes, high turnover, etc? Take $ on the way in, and the way out. AI is just the next in a long line of smart people pretending they know your business while you abdicate knowing your business or employees.

Hope leaders can be a bit braver and wiser this go 'round so we don’t get to a cliff’s edge in software.

Tbh I think the true leaders are high on coke.

Wow I didn’t know that I was leading this whole time.

Exactly. The problem isn’t moving part of production to some other facility or buying a part that you used to make in-house. It’s abdicating an entire process that you need to be involved in if you’re going to stay on top of the game long-term.

Claude Code is awesome but if you let it do even 30% of the things it offers to do, then it’s not going to be your code in the end.

I’m trying

Much appreciated 🫡

Something any (real, trained, educated) developer who has even touched AI in their career could have told you. Without a 3 month study.

What’s funny is this guy has 25 years of experience as a software developer. But three months was all it took to make it worthless. He also said it was harder than if he’d just written the code himself. Claude would make a mistake, he would correct it. Claude would make the same mistake again, having learned nothing, and he’d fix it again. Constant firefighting, he called it.

As someone who has been shoved in the direction of using AI for coding by my superiors, that’s been my experience as well. It’s fine at cranking out stackoverflow-level code regurgitation and mostly connecting things in a sane way if the concept is simple enough. The real breakthrough would be if the corrections you make would persist longer than a turn or two. As soon as your “fix-it prompt” is out of the context window, you’re effectively back to square one. If you’re expecting it to “learn” you’re gonna have a bad time. If you’re not constantly double checking its output, you’re gonna have a bad time.

@felbane @AutistoMephisto i don’t have a cs degree (and am more than willing to accept the conclusions of this piece) but how is it not viable to audit code as it’s produced so as it’s both vetted and understood in sequence?

Auditing the code it produces is basically the only effective way to use coding LLMs at this point.

You’re basically playing the role of senior dev code reviewing and editing a junior dev’s code, except in this case the junior dev randomly writes an amalgamation of mostly valid, extremely wonky, and/or complete bullshit code. It has no concept of best practices, or fitness for purpose, or anything you’d expect a junior dev to learn as they gain experience.

Now given the above, you might ask yourself: “Self, what if I myself don’t have the skills or experience of a senior dev?” This is where vibe coding gets sketchy or downright dangerous: if you don’t notice the problems in generated code, you’re doomed to fail sooner or later. If you’re lucky, you end up having to do a big refactoring when you realize the code is brittle. If you’re unlucky, your backend is compromised and your CTO is having to decide whether to pay off the ransomware demands or just take a chance on restoring the latest backup.

If you’re just trying to slap together a quick and dirty proof of concept or bang out a one-shot script to accomplish a task, it’s fairly useful. If you’re trying to implement anything moderately complex or that you intend to support for months/years, you’re better off just writing it yourself as you’ll end up with something stylistically cohesive and more easily maintainable.

@felbane thanks, such a thorough response really appreciate your time.

It’s still useful to have an actual “study” (I’d rather call it a POC) with hard data you can point to, rather than just “trust me bro”.

Like the MIT study that the author refers to? The one that already existed before they decided they need to do it themself?

I was in charge of an AI pilot project two years back at my company. That was my conclusion, among others.

Also, what MIT also told them. Literally MIT lol

Untrained dev here, but the trend I’m seeing is spec-driven development where AI generates the specs with a human, then implements the specs. Humans can modify the specs, and AI can modify the implementation.

This approach seems like it can get us to 99%, maybe.

Trained dev with a decade of professional experience, humans routinely fail to get me workable specs without hours of back and forth discussion. I’d say a solid 25% of my work week is spent understanding what the stakeholders are asking for and how to contort the requirements to fit into the system.

If these humans can’t be explicit enough with me, a living thinking human that understands my architecture better than any LLM, what chance does an LLM have at interpreting them?

Thus you get a piece of software that no one really knows shit about the inner workings of. Sure you have a bunch of spec sheets but no one was there doing the grunt work so when something inevitably breaks during production there’s no one on the team saying “oh, that might be related to this system I set up over here.”

How is what you’re describing different to what the author is talking about? Isn’t it essentially the same as “AI do this thing for me”, “no not like that”, “ok that’s better”? The trouble the author describes, ie the solution being difficult to change, or having no confidence that it can be safely changed, is still the same.

This poster https://calckey.world/notes/afzolhb0xk is more articulate than my post.

The difference with this “spec-driven” approach is that the entire process is repeatable by AI once you’ve gotten the spec sorted. So you no longer work on the code, you just work on the spec, which can be a collection of files, files in folders, whatever — but the goal is some kind of determinism, I think.

I use it on a much smaller scale and haven’t really cared much for the “spec as truth” approach myself, at this level. I also work almost exclusively on NextJS apps with the usual Tailwind + etc stack. I would certainly not trust a developer without experience with that stack to generate “correct” code from an AI, but it’s sort of remarkable how I can slowly document the patterns of my own codebase and just auto-include it as context on every prompt (or however Cursor does it) so that everything the LLMs suggest gets LLM-reviewed against my human-written “specs”. And doubly neat is that the resulting documentation of patterns turns out to be really helpful to developers who join or inherit the codebase.

I think the author / developer in the article might not have been experienced enough to direct the LLMs to build good stuff, but these tools like React, NextJS, Tailwind, and so on are all about patterns that make us all build better stuff. The LLMs are like “8 year olds” (someone else in this thread) except now they’re more like somewhat insightful 14 year olds, and where they’ll be in another 5 years… Who knows.

Anyway, just saying. They’re here to stay, and they’re going to get much better.

They’re here to stay

Eh, probably. At least for as long as there is corporate will to shove them down the rest of our throats. But right now, in terms of sheer numbers, humans still rule, and LLMs are pissing off more and more of us every day while their makers are finding it increasingly harder to forge ahead in spite of us, which they are having to do ever more frequently.

and they’re going to get much better.

They’re already getting so much worse, with what is essentially the digital equivalent of kuru, that I’d be willing to bet they’ve already jumped the shark.

If their makers and funders had been patient, and worked the present nightmares out privately, they’d have a far better chance than they do right now, IMO.

Simply put, LLMs/“AI” were released far too soon, and with far too much “I Have a Dream!” fairy-tale promotion that the reality never came close to living up to, and then shoved with brute corporate force down too many throats.

As a result, now you have more and more people across every walk of society pushed into cleaning up the excesses of a product they never wanted in the first place, being forced to share their communities AND energy bills with datacenters, depleted water reserves, privacy violations, EXCESSIVE copyright violations and theft of creative property, having to seek non-AI operating systems just to avoid it . . . right down to the subject of this thread, the corruption of even the most basic video search.

Can LLMs figure out how to override an angry mob, or resolve a situation wherein the vast majority of the masses are against the current iteration of AI even though the makers of it need us all to be avid, ignorant consumers of AI for it to succeed? Because that’s where we’re going, and we’re already farther down that road than the makers ever foresaw, apparently having no idea just how thin the appeal is getting on the ground for the rest of us.

So yeah, I could be wrong, and you might be right. But at this point, unless something very significant changes, I’d put money on you being mostly wrong.

Even more efficient: humans do the specs and the implementation. AI has nothing to contribute to specs, and is worse at implementation than an experienced human. The process you describe, with current AIs, offers no advantages.

AI can write boilerplate code and implement simple small-scale features when given very clear and specific requests, sometimes. It’s basically an assistant to type out stuff you know exactly how to do and review. It can also make suggestions, which are sometimes informative and often wrong.

If the AI were a member of my team it would be that dodgy developer whose work you never trust without everyone else spending a lot of time holding their hand, to the point where you wish you had just done it yourself.

Have you used any AI to try and get it to do something? It learns generally, not specifically. So you give it instructions and then it goes, “How about this?” You tell it that it’s not quite right and to fix these things and it goes off on a completely different tangent in other areas. It’s like working with an 8 year old who has access to the greatest stuff around.

It doesn’t even actually learn, though.

Fractional CTO: Some small companies benefit from the senior experience of these kinds of executives but don’t have the money or the need to hire one full time. A fraction of the time they are C suite for various companies.

Sooo… he works multiple part-time jobs?

Weird how a forced technique of the ultra-poor is showing up here.

It’s more like the MSP IT style of business. There are clients that consult you for your experience or that you spend a contracted amount of time with and then you bill them for your time as a service. You aren’t an employee of theirs.

Or he’s some deputy assistant vice president or something.

Deputy assistant to the vice president

I cannot understand and debug code written by AI. But I also cannot understand and debug code written by me.

Let’s just call it even.

At least you can blame yourself for your own shitty code, which hopefully will never attempt to “accidentally” erase the entire project

I don’t know how that happens, I regularly use Claude code and it’s constantly reminding me to push to git.

As an experiment I asked Claude to manage my git commits, it wrote the messages, kept a log, archived excess documentation, and worked really well for about 2 weeks. Then, as the project got larger, the commit process was taking longer and longer to execute. I finally pulled the plug when the automated commit process - which had performed flawlessly for dozens of commits and archives, accidentally irretrievably lost a batch of work - messed up the archive process and deleted it without archiving it first, didn’t commit it either.

AI/LLM workflows are non-deterministic. This means: they make mistakes. If you want something reliable, scalable, repeatable, have the AI write you code to do it deterministically as a tool, not as a workflow. Of course, deterministic tools can’t do things like summarize the content of a commit.
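To make the "tool, not workflow" point concrete: the lost-archive incident described above is the kind of failure a small deterministic script rules out by construction. A minimal sketch, assuming a hypothetical `archive_then_delete` helper (the name and behaviour are made up for illustration, not something Claude or git provides):

```python
import hashlib
import shutil
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Return the SHA-256 hex digest of a file's contents."""
    return hashlib.sha256(path.read_bytes()).hexdigest()


def archive_then_delete(src: Path, archive_dir: Path) -> Path:
    """Copy src into archive_dir, verify the copy, and only then delete src.

    Hypothetical helper: the name and behaviour are illustrative assumptions.
    """
    archive_dir.mkdir(parents=True, exist_ok=True)
    dest = archive_dir / src.name
    if dest.exists():
        raise FileExistsError(f"refusing to overwrite {dest}")
    shutil.copy2(src, dest)
    # The original is removed only after the archived copy is proven identical.
    if sha256_of(src) != sha256_of(dest):
        dest.unlink()
        raise IOError(f"archive copy of {src} does not match; original kept")
    src.unlink()
    return dest
```

The same inputs always produce the same sequence of steps, so "deleted it without archiving it first" cannot happen; the LLM's job shrinks to writing and reviewing this once instead of re-improvising it on every commit.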

The longer the project the more stupid Claude gets. I’ve seen it both in chat, and in Claude code, and Claude explains the situation quite well:

Increased cognitive load: Longer projects have more state to track - more files, more interconnected components, more conventions established earlier. Each decision I make needs to consider all of this, and the probability of overlooking something increases with complexity.

Git specifically: For git operations, the problem is even worse because git state is highly sequential - each operation depends on the exact current state of the repository. If I lose track of what branch we’re on, what’s been committed, or what files exist, I’ll give incorrect commands.

Anything I do with Claude. I will split into different chats, I won’t give it access to git but I will provide it an updated repository via Repomix. I get much better results because of that.

Yeah, context management is one big key. The “compacting conversation” hack is a good one, you can continue conversations indefinitely, but after each compact it will throw away some context that you thought was valuable.

The best explanation I have heard for the current limitations is that there is a “context sweet spot” for Opus 4.5 that’s somewhere short of 200,000 tokens. As your context window gets filled above 100,000 tokens, at some point you’re at “optimal understanding” of whatever is in there, then as you continue on toward 200,000 tokens the hallucinations start to increase. As a hack, they “compact the conversation” and throw out less useful tokens getting you back to the “essential core” of what you were discussing before, so you can continue to feed it new prompts and get new reactions with a lower hallucination rate, but with that lower hallucination rate also comes a lower comprehension of what you said before the compacting event(s).

Some describe an aspect of this as the “lost in the middle” phenomenon since the compacting event tends to hang on to the very beginning and very end of the context window more aggressively than the middle, so more “middle of the window” content gets dropped during a compacting event.
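A toy illustration of the trade-off described above, where the start and end of the context survive and the middle is dropped. This is only a sketch under loud assumptions: `compact` and `count_tokens` are hypothetical names, tokens are approximated by whitespace-separated words, and it truncates rather than summarizes, so it is not how any particular product implements compaction:

```python
from typing import List


def count_tokens(message: str) -> int:
    """Crude stand-in for a tokenizer: one token per whitespace-separated word."""
    return len(message.split())


def compact(messages: List[str], budget: int, keep_head: int = 1) -> List[str]:
    """Keep the first keep_head messages, then as many of the most recent
    messages as fit in the remaining token budget. Middle messages are dropped."""
    head = messages[:keep_head]
    remaining = budget - sum(count_tokens(m) for m in head)
    tail: List[str] = []
    # Walk backwards from the newest message, stopping when the budget runs out.
    for message in reversed(messages[keep_head:]):
        cost = count_tokens(message)
        if cost > remaining:
            break
        tail.append(message)
        remaining -= cost
    return head + list(reversed(tail))
```

Anything you said in the middle of a long session is the first thing to go, which matches the "lower comprehension of what you said before" complaint.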

I also cannot understand and debug code written by me.

So much this. I look back at stuff I wrote 10 years ago and shake my head, console myself that “we were on a really aggressive schedule.” At least in my mind I can do better, in practice the stuff has got to ship eventually and what ships is almost never what I would call perfect, or even ideal.

To quote your quote:

I got the product launched. It worked. I was proud of what I’d created. Then came the moment that validated every concern in that MIT study: I needed to make a small change and realized I wasn’t confident I could do it. My own product, built under my direction, and I’d lost confidence in my ability to modify it.

I think the author just independently rediscovered “middle management”. Indeed, when you delegate the gruntwork under your responsibility, those same people are who you go to when addressing bugs and new requirements. It’s not on you to effect repairs: it’s on your team. I am Jack’s complete lack of surprise. The idea of relying on AI to do nuanced work like this and arrive at the exact correct answer to the problem is naive at best. I’d be sweating too.

The problem though (with AI compared to humans): The human team learns, i.e. at some point they probably know what the mistake was and avoid doing it again. AI instead of humans: well maybe the next or different model will fix it maybe…

And what is very clear to me after trying to use these models, the larger the code-base the worse the AI gets, to the point of not helping at all or even being destructive. Apart from dissecting small isolatable pieces of independent code (i.e. keep the context small for the AI).

Humans likely get slower with a larger code-base, but they (usually) don’t arrive at a point where they can’t progress any further.

Humans likely get slower with a larger code-base, but they (usually) don’t arrive at a point where they can’t progress any further.

Notable exceptions like: https://peimpact.com/the-denver-international-airport-automated-baggage-handling-system/

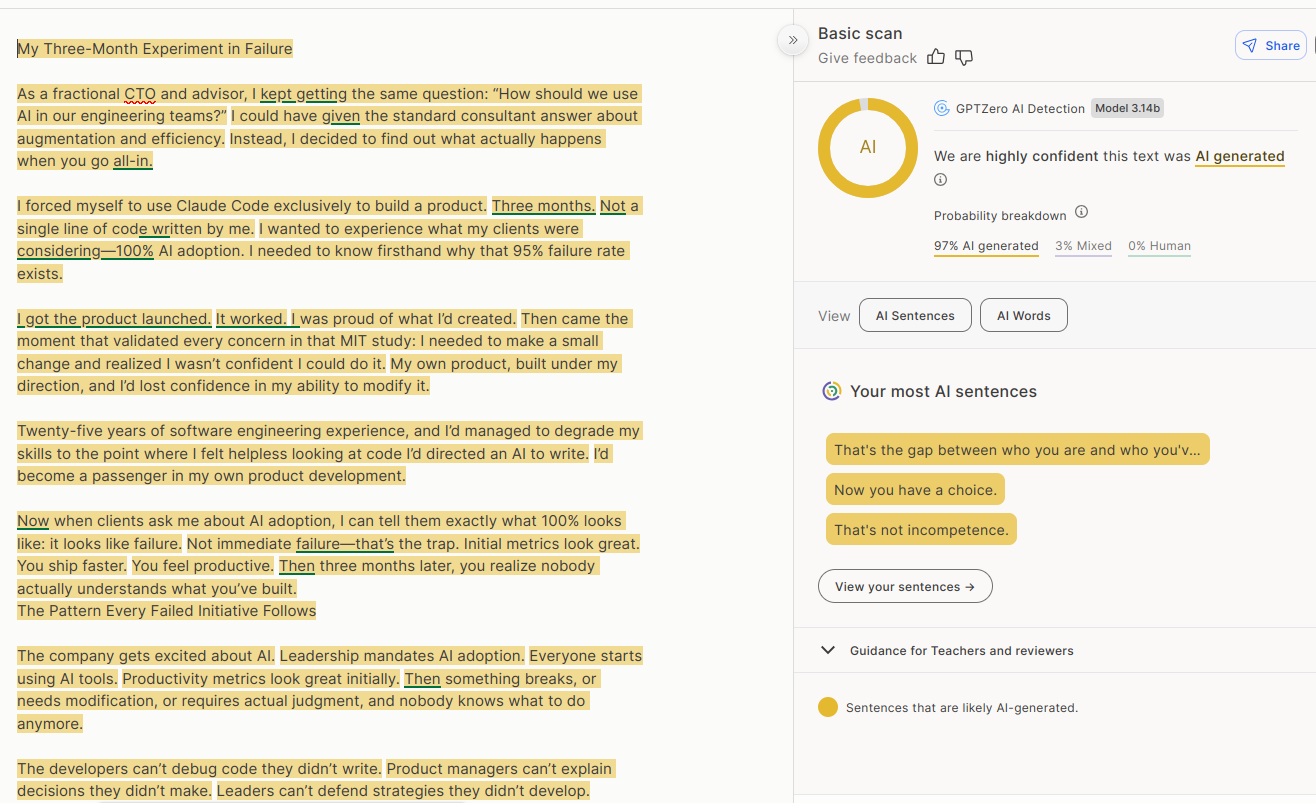

FYI this article is written with an LLM.

Don’t believe a story just because it confirms your view!

I’ve heard that these tools aren’t 100% accurate, but your last point is valid.

I agree but look at that third paragraph, it has the dash that nobody ever uses. Telltale signs right there

Sure, but plenty of journalists use the em-dash. That’s where LLMs got it from originally. It alone is not a signature of LLM use in journalistic articles (I’m not calling this CTO guy a journalist, to be clear)

Context is everything. In publishing it’s standard; in online forums it’s either needlessly pretentious or AI and either way they deserve to be called out.

When I say “nobody uses it” I mean nobody other than people getting paid to write for a living would use it. This tech bro would not use that em dash, or the quotation marks you also can’t find on the keyboard.

GPTZero is 99% accurate.

I mean… has anyone other than the company that made the tool said so? Like from a third party? I don’t trust that they’re not just advertising.

The answer to that is literally in the first sentence of the body of the article I linked to.

Ai says Ai correction tool about how crappy Ai is at coding’s article is 99 percent chance of being Ai, results generated by Ai. . .

Aren’t these LLM detectors super inaccurate?

This!

Also, the irony: those are AI tools used by anti-AI people who use AI to try and (roughly) determine if a content is AI, by reading the output of an AI. Even worse: as far as I know, they’re paid tools (at least every tool I saw in this regard required subscription), so Anti-AI people pay for an AI in order to (supposedly) detect AI slop. Truly “AI-rony”, pun intended.

https://gptzero.me/ is free, give it a try. Generate some slop in ChatGPT and copy and paste it in.

Thanks, didn’t know about that one. It seems interesting (but limited, according to their “Pricing” ; every time a tool has a “pricing” menu item, betcha they’ll either be anything but gratis or extremely limited in their “free tier”), I created an account and I’ll soon try it with some of the occult poetry I use to write. I’m ND so I’m fully aware of how my texts often sound like AI slop.

I’ve tested lots and lots of different ones. GPTZero is really good.

If you read the article again, with a critical perspective, I think it will be obvious.

Yes, but also the opposite. Don’t discount a valid point just because it was formulated using an LLM.

The story was invented so people would subscribe to his substack, which exists to promote his company.

We’re being manipulated into sharing made-up rage-bait in order to put money in his pocket.

Lol the irony… You’re doing literally the exact same thing by trusting that site because it confirms your view

“fractional CTO”(no clue what that means, don’t ask me)

For those who were also interested to find out this means: Consultant and advisor in a part time role, paid to make decisions that would usually fall under the scope of a CTO, but for smaller companies who can’t afford a full-time experienced CTO

That sounds awful. You get someone who doesn’t really know the company or product, they take a bunch of decisions that fundamentally affect how you work, and then they’re gone.

… actually, that sounds exactly like any other company.

I’ve worked with a fractional CISO. He was scattered, but was insanely useful at setting roadmaps, writing procedures/docs, working audits and correcting us when we were moving in bad cybersecurity directions.

Fractional is way better than none.

That’s more what a consultant is. A “Fractional C[insert function here]O” is permanent or at least long-term. It just means the firm doesn’t have the resources and need for a full-time executive in that role. I’ve worked with fractional CTO, CIO, CFO, and CMO executives at different companies and they’ve all been required to have the company, industry, market, etc. knowledge that a non-fractional employee would. Honestly, this concept has been wonderful for small to midsize companies.

I used to deal with programming since I was 9 y.o., with my professional career in DevOps starting several years later, in 2013. I dealt with lots of others’ code, legacy code, very shitty code (especially done by my “managers” who cosplayed as programmers), and tons of technical debt.

Even though I’m quite of a LLM power-user (because I’m a person devoid of other humans in my daily existence), I never relied on LLMs to “create” my code: rather, what I did a lot was tinkering with different LLMs to “analyze” my own code that I wrote myself, both to experiment with their limits (e.g.: I wrote a lot of cryptic, code-golf one-liners and fed it to the LLMs in order to test their ability to “connect the dots” on whatever was happening behind the cryptic syntax) and to try and use them as a pair of external eyes beyond mine (due to their ability to “connect the dots”, and by that I mean their ability, as fancy Markov chains, to relate tokens to other tokens with similar semantic proximity).

I did test them (especially Claude/Sonnet) for their “ability” to output code, not intending to use the code because I’m better off writing my own thing, but you likely know the maxim, one can’t criticize what they don’t know. And I tried to know them so I could criticize them. To me, the code is… pretty readable. Definitely awful code, but readable nonetheless.

So, when the person says…

The developers can’t debug code they didn’t write.

…even though they argue they have more than 25 years of experience, it feels to me like they don’t.

One thing is saying “developers find it pretty annoying to debug code they didn’t write”, a statement that I’d totally agree with! It’s awful to try to debug others’ (human or otherwise) code, because you need to try to put yourself in their shoes without knowing how their shoes are… But it’s doable, especially by people who have dealt with programming logic since their childhood.

Saying “developers can’t debug code they didn’t write”, to me, seems like a layperson who doesn’t belong to the field of Computer Science, doesn’t like programming, and/or only pursued a “software engineer” career purely because of money/capitalistic mindset. Either way, if a developer can’t debug others’ code, sorry to say, but they’re not developers!

Don’t get me wrong: I’m not intending to be prideful or pretending to be awesome, this is beyond my person, I’m nothing, I’m no one. I abandoned my career, because I hate the way the technology is growing more and more enshittified. Working as a programmer for capitalistic purposes ended up depleting the joy I used to have back when I coded on a daily basis. I’m not on the “job market” anymore, so what I’m saying is based on more than 10 years of former professional experience. And my experience says: a developer that can’t put themselves into at least trying to understand the worst code out there can’t call themselves a developer, full stop.

An LLM can generate code like an intern getting ahead of their skis. If you let it generate enough code, it will do some gnarly stuff.

Another facet is the nature of mistakes it makes. After years of reviewing human code, I have this tendency to take some things for granted, certain sorts of things a human would just obviously get right and I tend not to think about it. AI mistakes are frequently in areas my brain has learned to gloss over and take on faith that the developer probably didn’t screw that part up.

AI generally generates the same sorts of code that I hate to encounter when humans write, and debugging it is a slog. Lots of repeated code, not well factored. You would assume that if the same exact thing is fine in many places, you’d have a common function with common behavior, but no, AI repeated itself and didn’t always get consistent behavior out of identical requirements.

His statement is perhaps an over simplification, but I get it. Fixing code like that is sometimes more trouble than just doing it yourself from the onset.

Now I can see the value in generating code in digestible pieces, discarding when the LLM gets oddly verbose for a simple function, or when it gets it wrong, or if you can tell by looking you’d hate to debug that code. But the code generation can just be a huge mess and if you did a large project exclusively through prompting, I could see the end result being just a hopeless mess. I’m frankly surprised he could even declare an initial “success”, but it was probably “tutorial ware” which would be ripe fodder for the code generators.

I found the article interesting, but I agree with you. Good programmers have to and can debug other people’s code. But, to be fair, there are also a lot of bad programmers, and a lot that can’t debug for shit…

Often, those are developers who “specialized” in one or two programming languages, without specializing in computer/programming logic.

I used to repeat a personal saying across job interviews: “A good programmer knows a programming language. An excellent programmer knows programming logic”. IT positions often require a dev to have a specific language/framework in their portfolio (with Rust being the Current Thing™ now) and they reject people who have vast experience across several languages/frameworks but the one required, as if these people weren’t able to learn the specific language/framework they require.

Languages and frameworks differ on syntax, namings, paradigms, sometimes they’re extremely different from other common languages (such as `(Lisp (parenthetic-hell))`, or `.asciz "Assembly-x86_64"`), but they all talk to the same computer logic under the hood. Once a dev becomes fluent in bitwise logic (or, even better, they become so fluent in talking with computers that they can say `41 53 43 49 49 20 63 6f 64 65` without tools, as if it were English), it’s just a matter of accustoming oneself to the specific syntax and naming conventions from a given language.

Back when I was enrolled in college, I lost count of how many colleagues struggled with the entire course as soon as they were faced by Data Structure classes, binary trees, linked lists, queues, stacks… And Linear Programming, maximization and minimization, data fitness… To the majority of my colleagues, those classes were painful, especially because the teachers were somewhat rigid.

And this sentiment echoes across the companies and corps. Corps (especially the wannabe-programmer managers) don’t want to deal with computers, they want to deal with consumers and their sweet money, but a civil engineer and their masons can’t possibly build a house without willing to deal with a blueprint and the physics of building materials. This is part of the root of this whole problem.
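For anyone who doesn’t read hex as if it were English, the byte string quoted above (41 53 43 49 49 20 63 6f 64 65) can be decoded in a couple of lines. A small sketch using only the Python standard library; the variable names are just for illustration:

```python
# Each pair of hex digits is one byte; bytes.fromhex ignores the spaces between them.
hex_bytes = "41 53 43 49 49 20 63 6f 64 65"

# Interpret the ten bytes as ASCII characters.
decoded = bytes.fromhex(hex_bytes).decode("ascii")

print(decoded)  # prints: ASCII code
```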

The hard thing about debugging other people’s code is understanding what they’re trying to do. Once you’ve figured that out it’s just like debugging your own code. But not all developers stick to good patterns, good conventions or good documentation, and that’s when you can spend a long time figuring out their intention. Until you’ve got that, you don’t know what’s a bug.

That feels like the trap here. There is no intention, just patterns.

When the cost to generate new code has become so cheap, and the cost of devs maintaining code they didn’t write gets higher, there’s a huge shift happening to just throw out the code and regenerate it instead. Next year will be the find out phase, where the massive decline in code quality catches up with big projects.

where the massive decline in code quality catches up with big projects.

That’s going to depend, as always, on how the projects are managed.

LLMs don’t “get it right” on the first pass, ever in my experience - at least for anything of non-trivial complexity. But, their power is that they’re right more than half of the time AND when they can be told they are wrong (whether by a compiler, or a syntax nanny tool, or a human tester) AND then they can try again, and again as long as necessary to get to a final state of “right,” as defined by their operators.
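The "told they are wrong, then try again" loop above can be sketched as follows. Everything here is an assumption for illustration: `first_passing` and `syntax_and_behaviour_check` are hypothetical names, and the "model outputs" are a hard-coded list rather than real LLM responses. In a real setup the error message would be fed back into the next prompt instead of just being printed.

```python
from typing import Callable, Iterable, Optional


def first_passing(candidates: Iterable[str],
                  check: Callable[[str], Optional[str]],
                  max_attempts: int = 5) -> Optional[str]:
    """Return the first candidate for which check() reports no error.

    check returns None on success or an error message on failure.
    """
    for attempt, source in enumerate(candidates, start=1):
        if attempt > max_attempts:
            break
        error = check(source)
        if error is None:
            return source
        print(f"attempt {attempt} rejected: {error}")
    return None


def syntax_and_behaviour_check(source: str) -> Optional[str]:
    """Compile the source, then require add(2, 3) == 5."""
    try:
        namespace: dict = {}
        exec(compile(source, "<candidate>", "exec"), namespace)
    except SyntaxError as exc:
        return f"syntax error: {exc.msg}"
    if namespace.get("add", lambda a, b: None)(2, 3) != 5:
        return "add(2, 3) did not return 5"
    return None


# Stand-ins for successive model outputs (hard-coded, not real LLM responses).
attempts = [
    "def add(a, b) return a + b",        # does not compile
    "def add(a, b):\n    return a - b",  # compiles, wrong behaviour
    "def add(a, b):\n    return a + b",  # passes both checks
]
accepted = first_passing(attempts, syntax_and_behaviour_check)
```

The loop is only as good as `check`: whatever the compiler, linter, or tester doesn’t catch is exactly what ships, which is where "as defined by their operators" does a lot of work.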

The trick, as always, is getting the managers to allow the developers to keep polishing the AI (or human developer’s) output until it’s actually good enough to ship.

The question is: which will take longer, which will require more developer “head count” during that time to get it right - or at least good enough for business?

I feel like the answers all depend on the particular scenario: in some places, for some applications, current state-of-the-art AI can deliver that “good enough” product that we have always had with lower developer head count and/or shorter delivery cycles. For other organizations with other product types, it will certainly take longer / more budget.

However, the needle is off 0, there are some places where it really does help, a lot. The other thing I have seen over the past 12 months: it’s improving rapidly.

Will that needle ever pass 90% of all software development benefitting from LLM agent application? I doubt it. In my outlook, I see that needle passing +50% in the near future - but not being there quite yet.

Given the stochastic nature of LLMs and the pseudo-darwinian nature of their training process, I sometimes wonder if geneticists wouldn’t be more suited to interpreting LLM output than programmers.

Given how it’s very akin to dynamic and chaotic systems (e.g. double pendulum, whose initial position, mass and length rules the movement of the pendulum, very similar to how the initial seed and input rule the output of generative AIs) due to the insurmountable amount of physically intertwined factors and the possibility of generalizing the system in mathematical, differential terms, I’d say that the more fit would be a physicist. Or a mathematician. lol

As always, relevant xkcd: https://xkcd.com/435/

AI is really great for small apps. I’ve saved so many hours over weekends that would otherwise be spent coding a small thing I need a few times whereas now I can get an AI to spit it out for me.

But anything big and it’s fucking stupid, it cannot track large projects at all.

What kind of small things have you vibed out that you needed?

Encryption, login systems and pricing algorithms. Just the small annoying things /s

I’m curious about that too since you can “create” most small applications with a few lines of Bash, pipes, and all the available tools on Linux.

Maybe they don’t run Linux. 🤭

Perverts!

Depends on how demanding you are about your application deployment and finishing.

Do you want that running on an embedded system with specific display hardware?

Do you want that output styled a certain way?

AI/LLMs are getting pretty good at taking those few lines of Bash, pipes and other tools’ concepts, translating them to a Rust, or C++, or Python, or what have you app and running them in very specific environments. I have been shocked at how quickly and well Claude Sonnet styled an interface for me, based on a cell phone snapshot of a screen that I gave it with the prompt “style the interface like this.”

FWIW that’s a good question but IMHO the better question is :

What kind of small things have you vibed out that you needed that didn’t actually exist or at least you couldn’t find after a 5min search on open source forges like Codeberg, GitLab, GitHub, etc?

Because making something quick that kind of works is nice… but why even do so in the first place if it’s already out there, maybe maintained but at least tested?

Since you put such emphasis on “better”: I’d still like to have an answer to the one I posed.

Yours would be a reasonable follow-up question if we noticed that their vibed projects are utilities already available in the ecosystem. 👍

Sure, you’re right, I just worry (maybe needlessly) about people re-inventing the wheel because it’s “easier” than searching, without properly understanding the cost of the entire process.

Very valid!

people re-inventing the wheel because it’s “easier” than searching, without properly understanding the cost of the entire process.

A good LLM will do a web search first and copy its answer from there…

@MangoCats @utopiah exactly this… I did some small stuff by shepherding LLMs, but first I searched for what I needed. Usually I find a small repo that kind of does what I want, then I clone it, change it a bit with the help of an LLM, and if I think it is useful I open a PR and let the maintainer decide if it’s good or not.

What if I can find it but it’s either shit or bloated for my needs?

Open an issue to explain why it’s not enough for you? If you can make a PR for it that actually implements the things you need, do it?

My point isn’t that everything is already out there and perfectly fits your need, only that a LOT is already out there. If we all re-invent the wheel in our own corner, it’s basically impossible to learn from each other.

These are the principles I follow:

https://indieweb.org/make_what_you_need

https://indieweb.org/use_what_you_make

I don’t have time to argue with FOSS creators to get my stuff in their projects, nor do I have the energy to maintain a personal fork of someone else’s work.

It’s much faster for me to start up Claude and code a very bespoke system just for my needs.

I don’t like web UIs nor do I want to run stuff in a Docker container. I just want a scriptable CLI application.

Like I just did a subtitle translation tool in 2-3 nights that produces much better quality than any of the ready made solutions I found on GitHub. One of which was an *arr stack web monstrosity and the other was a GUI application.

Neither did what I needed at the level of quality I want, so I made my own. One I can automate like I want and have running on my own server.

I don’t have time to argue with FOSS creators to get my stuff in their projects

So much this. Over the years I have found various issues in FOSS and “done the right thing” submitting patches formatted just so into their own peculiar tracking systems according to all their own peculiar style and traditions, only to have the patches rejected for all kinds of arbitrary reasons - to which I say: “fine, I don’t really want our commercial competitors to have this anyway, I was just trying to be a good citizen in the community. I’ve done my part, you just go on publishing buggy junk - that’s fine.”

So the claim is it’s easier to Claudge a whole new app than to make a personal fork of one that works? Sounds unlikely.

Depends entirely on the app.

Depends on the “app”.

A full ass Lemmy client? Nope.

A subtitle translator or a RSS feed hydrator or a similar single task “app”? Easily and I’ve done it many times already.

There have been some articles published positing that AI coding tools spell the end for FOSS because everybody is just going to do stuff independently and won’t need to share with each other anymore to get things done.

I think those articles are short sighted, and missing the real phenomenon that the FOSS community needs each other now more than ever in order to tame the LLMs into being able to write stories more interesting than “See Spot run.” and the equivalent in software projects.

And if the maintainer doesn’t agree to merge your changes, what do you do then?

You have to build your own project, where you get to decide what gets added and what doesn’t.

making something quick that kind of works is nice… but why even do so in the first place if it’s already out there, maybe maintained but at least tested?

In a sense, this is what LLMs are doing for you: regurgitating stuff that’s already out there. But… they are “bright” enough to remix the various bits into custom solutions. So there might already be a NWS API access app example, and a Waveshare display example, and so on, but there’s not a specific example that codes up a local weather display for the time period and parameters you want to see (like, temperature and precipitation every 15 minutes for the next 12 hours at a specific location) on the particular display you have. Oh, and would you rather build that in C++ instead of Python? Yeah, LLMs are actually pretty good at remixing little stuff like that into things you’re not going to find exact examples of ready to your spec.

I built a MAL clone using AI, nearly 700 commits of AI. Obviously I was responsible for the quality of the output and reviewing and testing that it all works as expected, and leading it in the right direction when going down the wrong path, but it wrote all of the code for me.

There are other MAL clones out there, but none of them do everything I wanted, so that’s why I built my own project. It started off as an inside joke with a friend, and eventually materialized as an actual production-ready project. It’s limited more by design of the fact that it relies on database imports and delta edits rather than the fact that it was written by AI, because that’s just the nature of how data for these types of things tend to work.

So if it can be vibe coded, it’s pretty much certainly already a “thing”, but with some awkwardness.

Maybe what you need is a combination of two utilities, maybe the interface is very awkward for your use case, maybe you have to make a tiny compromise because it doesn’t quite match.

Maybe you want a little utility to do stuff with media. Now you could navigate your way through ffmpeg and mkvextract, which together handle what you want, with some scripting to keep you from having to remember the specific way to do things in the myriad of stuff those utilities do. An LLM could probably knock that script out for you quickly without having to delve too deeply into the documentation for the projects.

I’ll put it this way: automated translation has been getting pretty good over the past 20 years, and LLMs are the latest step. Sure, human translators still look down their noses at “automated translations” but, in the real world, an automated translation gets the job done well enough most of the time.

LLMs are also pretty good at translating code, say from C++ to Rust. Not million line code bases, but the little concepts they can do pretty well.

On a completely different tack, I’ve been pretty happy with LLM generated parsers. Like: I’ve got 1000 log files here, and I want to know how many times these lines appear. You’ve got grep for that. But, write me a utility that finds all occurrences of these lines, reads the time stamps, and then searches for any occurrences of these other lines within +/- 1 minute of the first ones… grep can’t really do that, but a 5 minute vibe coded parser can.
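For a sense of scale, here is roughly what such a parser might look like. This is only a sketch under assumptions the comment doesn't state: that every log line starts with a `YYYY-MM-DD HH:MM:SS` timestamp, and that "these lines" can be matched by a plain substring. The names `parse_line` and `find_nearby` are made up for illustration.

```python
from datetime import datetime, timedelta

# Assumption: each log line starts with a timestamp such as
# "2024-05-01 12:00:03 some message". Real logs will likely differ.
TS_FORMAT = "%Y-%m-%d %H:%M:%S"
TS_LEN = 19  # length of a timestamp in the format above


def parse_line(line):
    """Return (timestamp, message), or None if there is no leading timestamp."""
    try:
        ts = datetime.strptime(line[:TS_LEN], TS_FORMAT)
    except ValueError:
        return None
    return ts, line[TS_LEN:].strip()


def find_nearby(lines, trigger, other, window=timedelta(minutes=1)):
    """For each line containing `trigger`, collect the lines containing
    `other` whose timestamp lies within +/- `window` of it."""
    parsed = [p for p in map(parse_line, lines) if p is not None]
    triggers = [(ts, msg) for ts, msg in parsed if trigger in msg]
    others = [(ts, msg) for ts, msg in parsed if other in msg]
    results = []
    for t_ts, t_msg in triggers:
        near = [msg for o_ts, msg in others if abs(o_ts - t_ts) <= window]
        results.append((t_ts, t_msg, near))
    return results
```

To run it over many files, the lines from each file can be read and passed in as one list. The pairwise comparison is quadratic, which should be fine for a one-off look at a thousand logs but would need sorting and a sliding window for anything bigger.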

If I understand correctly then this means mostly adapting the interface?

It’s certainly a use case that LLM has a decent shot at.

Of course, having said that I gave it a spin with Gemini 3 and it just hallucinated a bunch of crap that doesn’t exist instead of properly identifying capable libraries or frontending media tools…

But in principle and upon occasion it can take care of little convenience utilities/functions like that. I continue to have no idea though why some people seem to claim to be able to ‘vibe code’ up anything of significance, even as I thought I was giving it an easy hit it completely screwed it up…

Having used both Gemini and Claude… I use Gemini when I need to quickly find something I don’t want to waste time searching for, or I need a recipe found and then modified to fit what I have on hand.

Every time I’ve used Gemini for coding, it has ended in failure. It constantly forgets things, forgets what version of a package you’re using so it tells you to do something that is deprecated, it was hell. I had to hold its hand the entire time and talk to it like it’s a stupid child.

Claude just works. I use Claude for so many things both chat and API. I didn’t care for AI until I tried Claude. There’s a whole whack of novels by a Russian author I like but they stopped translating the series. Claude vibe coded an app to read the Russian ebooks, translate them by chapter in a way that prevented context bleed. I can read any book in any language for about $2.50 in API tokens.
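The comment doesn't say how the app avoided context bleed, but one plausible reading is that every chapter goes out as its own fresh request, with no chat history carried over. A minimal sketch of that idea, where `send` is a hypothetical stand-in for whatever API client is used and the role/content message shape is an assumption, not any particular vendor's API:

```python
def build_request(chapter_text, target_language="English"):
    """Build a fresh, self-contained message list for a single chapter."""
    return [
        {"role": "system",
         "content": f"Translate the user's text into {target_language}."},
        {"role": "user", "content": chapter_text},
    ]


def translate_book(chapters, send):
    """Translate chapters one at a time.

    `send` is a hypothetical callable: it takes a message list and
    returns the translated text.
    """
    translated = []
    for chapter in chapters:
        # A new message list every time: nothing from earlier chapters
        # is included, so earlier text cannot leak into this request.
        translated.append(send(build_request(chapter)))
    return translated
```

The trade-off is that names and terminology can drift between chapters, so a real tool might pass a small fixed glossary with every request.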

I’ve been using Claude to mediocre results, so this time I used Gemini 3 because everyone in my company is screaming “this time it works, trust us bro”. Claude has not been working so great for me for my day job either.

I tried using Gemini 3 for OpenSCAD, and it couldn’t slice a solid properly to save its life, I gave up on it after about 6 attempts to put a 3:12 slope shed roof on four walls. Same job in Opus 4.5 and I’ve got a very nicely styled 600 square foot floor plan with radiused 3D concrete printed walls, windows, doors, shed roof with 1’ overhang, and a python script that translates the .scad to a good looking .svg 2D floorplan.

I’m sure Gemini 3 is good for other things, but Opus 4.5 makes it look infantile in 3D modeling.

Not OP but I made a little menu thing for launching VMs and a script for grabbing trailers for downloaded movies that reads the name of the folder, finds the trailer and uses yt-dlp to grab it, puts it in the folder and renames it.
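The trailer grabber could look something like the sketch below. It is a guess at the approach, not the poster's script: it assumes "finds the trailer" means taking the first YouTube search hit via yt-dlp's `ytsearch1:` prefix, and the `-trailer` file naming and the function name `trailer_command` are made up for illustration.

```python
from pathlib import Path


def trailer_command(movie_dir):
    """Build a yt-dlp command that grabs the first YouTube search hit for
    "<folder name> trailer" and saves it inside the movie folder."""
    movie_dir = Path(movie_dir)
    query = f"ytsearch1:{movie_dir.name} trailer"
    # %(ext)s is filled in by yt-dlp with the downloaded file's extension.
    # Assumption: the folder name itself contains no "%" characters.
    output = str(movie_dir / f"{movie_dir.name}-trailer.%(ext)s")
    return ["yt-dlp", query, "-o", output]
```

The function only returns the command, so it can be printed and checked before anything runs. Passing the list to `subprocess.run(cmd, check=True)` for each movie folder would do the actual download.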

Definitely sounds like a tiny shell script but yeah, I guess it’s seconds with an agent rather than a few minutes with manual coding 👍

Yeah pretty much! TBH for the first one there are already things online that can do that, I just wanted to test how the AI would do so I gave it a simple thing, it worked well and so I kept using it. The second one I wasn’t sure about because it’s a bit copyright-y, but yeah like you say it was just quicker. I wouldn’t use the AI for anything super important, but I figured it’d do for a quick little script that only needs to do one specific thing just for me.

I would need to inspect every line of that shit before using it. I’d be too scared that it would delete my entire library, like that dude who got their entire drive erased by Google Antigravity…

Yeah that’s fair. And mine were pretty small scripts so easy enough to check, and I keep proper backups and whatnot so no big deal. But like I say I wouldn’t use it for anything big or important.

It’s never seconds. The first three versions won’t do what you want (or won’t work at all), so you end up arguing with this shit for a significant amount of time without realising it

😅

I have a little display on the back of a Raspberry Pi Zero W - it recoded that display software to refresh 5x faster, and it updated the content source to move from Meteomatics (who just discontinued their free API) to the National Weather Service.

I made a tool to hook into a site’s API and get me data each week.

Would’ve taken me a few hours to make the gui and learn the api/libraries, took Gemini 10 minutes with me only needing to give it two error outputs.

Neat, that’s cool.

I don’t really agree, I think that’s kind of a problem with approaching it. I’ve built some pretty large projects with AI, but the thing is, you have to approach it the same way you should be approaching larger projects to begin with - you need to break it down into smaller steps/parts.

You don’t tell it “build me an entire project that does X, Y, Z, and A, B, C”, you have to tackle it one part at a time.

They never actually say what “product” they make, it’s always “shipped product” like they’re a fucking Amazon warehouse. I suspect it’s because it’s some trivial webpage that would take a student an afternoon to whip up, and that they spent three days arguing with an autocomplete to shit out.

Cloudflare, AWS, and other recent major service outages are what come to mind re: AI code. I’ve no doubt it is getting forced into critical infrastructure without proper diligence.

Humans are prone to error so imagine the errors our digital progeny are capable of!

Computers are too powerful and too cheap. Bring back COBOL, painfully expensive CPU time, and some sort of basic knowledge of what’s actually going on.

Pain for everyone!

Yeah I think around the Pentium 200 MHz point was the sweet spot. Powerful enough to do a lot of things, but not so powerful that software can be as inefficient and wasteful as it is today.

I share a similar sentiment, but I’d place the turning point somewhere between 1 and 2 GHz.

Be careful what you wish for: with RAM prices soaring, owning a home computer might become less of an option. Luckily we can get a subscription for computing power easily!

I built a new PC early October, literally 2 weeks later RAM prices went nuts… so glad I pulled the trigger when I did